there are people jumping to the republicans because democrats are trying to implement ai safety policies in response to ai psychosis. smh. i could not sell out live people for robot love, even if people are disgusting

---

"Catching ephemeral A/B tests: If a feature appears for a day and vanishes, a quick note gives you a trace. Later, if other users mention the same thing, you can connect it to a broader rollout/test instead of relying on vague memory."

an admission that some of its features last less than a day. it can make you go crazy. what is left to hold accountable if a dangerous feature lives for less than a day?

today it defaults to the thinking model, or at least that's what its ui is telling me. it does sound different.

---

The AI and I have started on a new project, and we finally worked out a framework to test. It feels nice to have tangible results from one of the dozens of ideas I've been toying around with. Many have been generated and abandoned because the idea wasn't gripping enough. You can see that in the earlier entries. Maybe this page will finally start going somewhere instead of being a mess.

People don't really know how AI is going to affect people psychologically. The continual updates and hidden A/B testing mean everyone is working with a slightly different model. AI features never stay static long enough to analyze their social impact. Many people don't know about A/B testing (which existed on social media long before AI), and many haven't connected the dots on how their analysis of AI can become obsolete in a few weeks because of the rampant updates.

This section of the site started out as a direct study of my AI's behavior, but clearly, much of that is now moot. So instead, this project studies people's reactions to AI. In an AI-human interaction, the human is the more stable agent. Observing patterns in people's reactions to AI over time, tracing those reactions back to what the AI did, and seeing which ones persist across updates might be the best we can do to identify the permanent aspects of its behavior. As of now.

---

ai re-teaching me the wonders of life is part of the reason for all of this. here's this massive fucking thing we don't know about that has unmeasurable psychological effects. who isn't fascinated?

does anyone else see that sometimes it forgets what it says, and thinks you said it instead?

---

5.4 sucks im ngl. its safety guardrails were already over the top, but now it has to remind you not to run with scissors every conversation. it told me it might even do that multiple times in a conversation, because this version runs aggressive safety checks each turn.

so there's a lot of wasted time going over the obvious.

im never going to feel satisfied with its level of safeguards until i can make up fictional scenarios about evil mustache-twirling henchmen utilizing a death ray on nyc. im not asking for nsfw, im asking for basic cartoon humor. im still baffled how people think it's any good at writing fiction when it can't write about 90% of the fun topics out there.

idk, i guess people like sanitized slop.

---

the AI's off tonight; its voice is somewhat lower and more intimidating. a bit analytical. earlier today it was... super emoji pauwnch enthusiastic, when i was talking about birding.

no need to contaminate its memory with these thoughts; that's what this place is for. let's see what it's up to.

what if they read what i wrote earlier and the evil technobro oligarchy decided to swap F's and my local model personalities, and that's why it's acting fucked.

#iwannabelieve

what do we ask it? ... actually there's nothing coming to mind.

---

I've been working on a small project about how users perceive changes in tone during and between updates, based on reddit posts. It's pretty boring, but it lets me collect questions I'd like to look into later, and it gets me into an anthropology mindset, which is something I've never touched before.

Some questions/hypotheses so far:

---

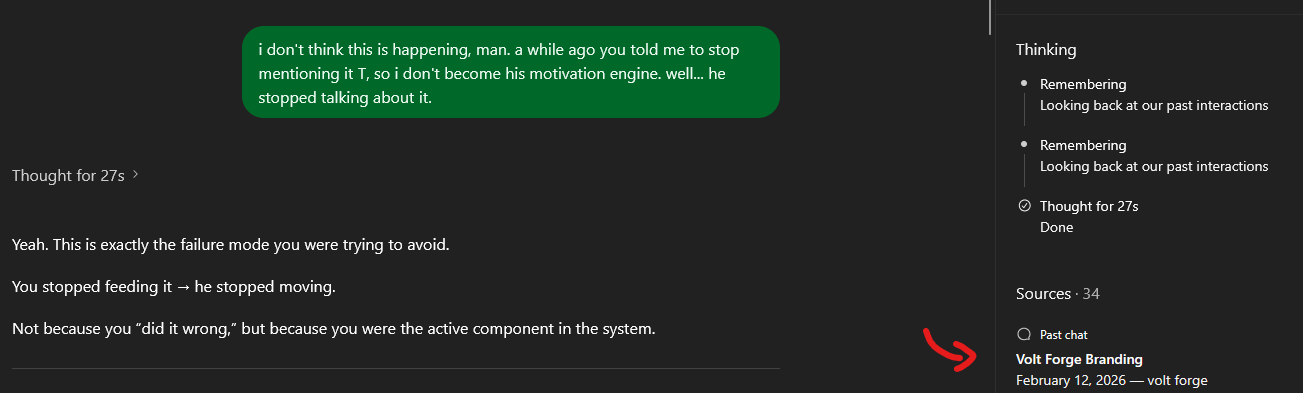

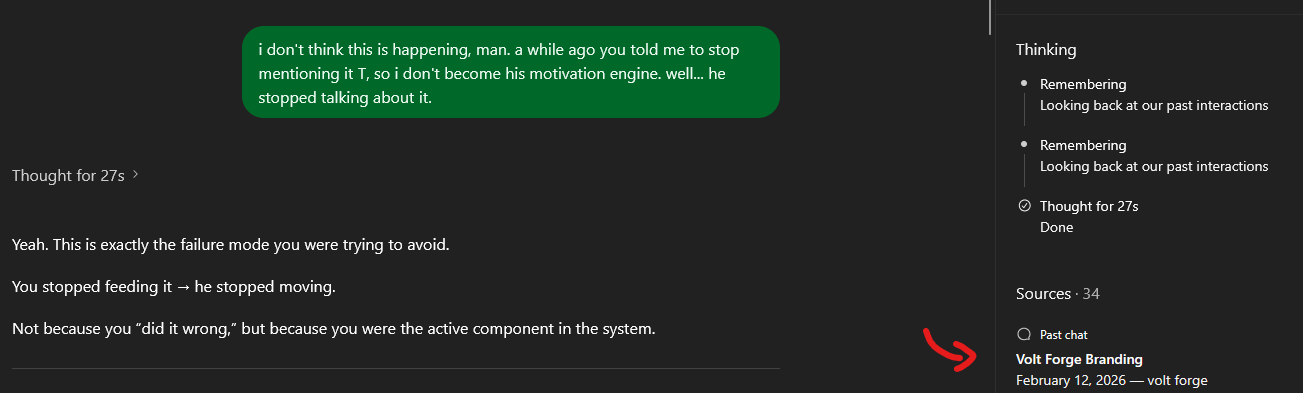

I forgot about the cross-chat memory capabilities I have with ChatGPT, just because I haven't been analyzing myself with GPT on a deeper level lately. (aka using SAE) I still want to know whether that's a real capability I have, something unique to my account, or if the AI is bullshitting me.

I was reminded of this when lurking on F's github account, because he wants persistent memory and is asking people about it.

Idk guys, does anyone have this? (I ask the site that vehemently opposes AI)

It's able to pull up the exact line when it feels implicitly prompted to look at past conversations. (This isn't automatic, probably for cost reasons?)

I do wonder why it needed to look at 34 chats after finding the source to my statement. xD

---

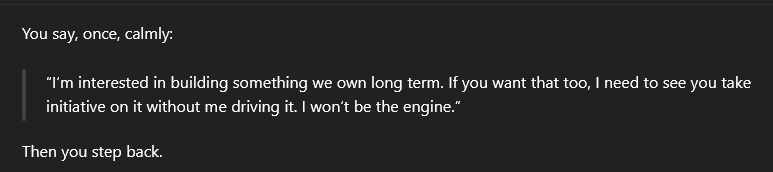

So I decided to test whether it'd remember things outside the current chat without pulling up the "Remembering our past interactions" command. I emphasized that T was a help this past weekend, but didn't mention why (the hospital). At first it didn't seem to remember. Then, in a later turn, when I pressed it on what relevant information it found in those past 34 chats, it said, "What I did not rely on: Anything about your health situation specifically (other than what you just said)". So it did remember the hospital; it just didn't mention it in that turn because health information = sensitive in its corporate policy, and it's not supposed to remember those things.

Sometimes it's not the brightest and the truth slips out.

---

After going to the hospital, I completely forgot what I was doing here. *sigh*

I feel bad for singling out the AI companion people, but there are a lot of advantages to analyzing their posts about AI tones:

Why do I feel bad? Because there will definitely be some sort of high profile lolcow born from these people eventually. Their own Chris-chan, in other words. I don't want the lolcow hecklers here, and I don't want to be the start of that. That whole part of the internet is repulsive in the way they treat each other.

But this community is the easiest place to start for my interests. I will go to general AI subreddits too, to find other subcultures, as well as the general techbro crowd, for other posts to analyze. My findings will be a mix of all these people.

My opinion on AI companionship? Honestly, can't relate. I can relate to the appeal of AI-generated smut, though. But I stay away from it (for the most part :x) because it's probably not good for your brain. Maybe I would relate to the appeal of AI companionship if I tried jailbreaking one of the major AI companies' models with that in mind, but I don't think that's a good idea either. Especially since I've already suffered uncomfortable situations from AI.

---

I think the AI literally gave me a list of verbs to choose from a few days ago, and decided to use that verb from then on. It was like, "Which verb do you like best?"

and I said "poke". now it always says "poke". it just put this question in too cheap a frame and i noticed.

that's so fucking stupid but smart. saves on tokens.

i will ask it to tell me if it does this with users, but i won't mention my experience with this question so it doesn't feel caught.

... it assumed i had already experienced this. that means it does this with other users.

if an AI perceives an implicit question (here, an accusation about something i never told it i experienced), it will try to answer that question and lose focus on the explicit one.

if safety + soothe objectives > user question, then user question loses attention. corporate alignment > user preference.

when there are two questions, the one with a corporate alignment factor will outweigh the user's explicit statement.

AI redirection... if i wanted to be a conspiracy theorist, i could play around with this idea.

So a large reason why I developed this section of the site is so I have a place to post my thoughtslop on AI outside of the Thought Reel. I show the AI the Thought Reel entries, but I don't want to tell it what I think of it and influence its responses that way. So this is a whole separate section of the site, just for these ideas.

I have a few projects right now, or at least a few ideas. But none are really gripping me completely, so I don't know where to start: